In the first two weeks of this series, we advised you on how to consume news about AI and heard from journalism engineers on their concerns and hopes for AI and how it’ll change the way we work in newsrooms to better reach and engage our audiences. We learned that we don’t have to learn or know everything.

This week, we’ll talk about the question that helped me build a presentation at Media Party this year: what should I do with AI, or any other emerging technology?

The answer is: nothing, if you don’t have a problem worth solving.

WHERE TO START

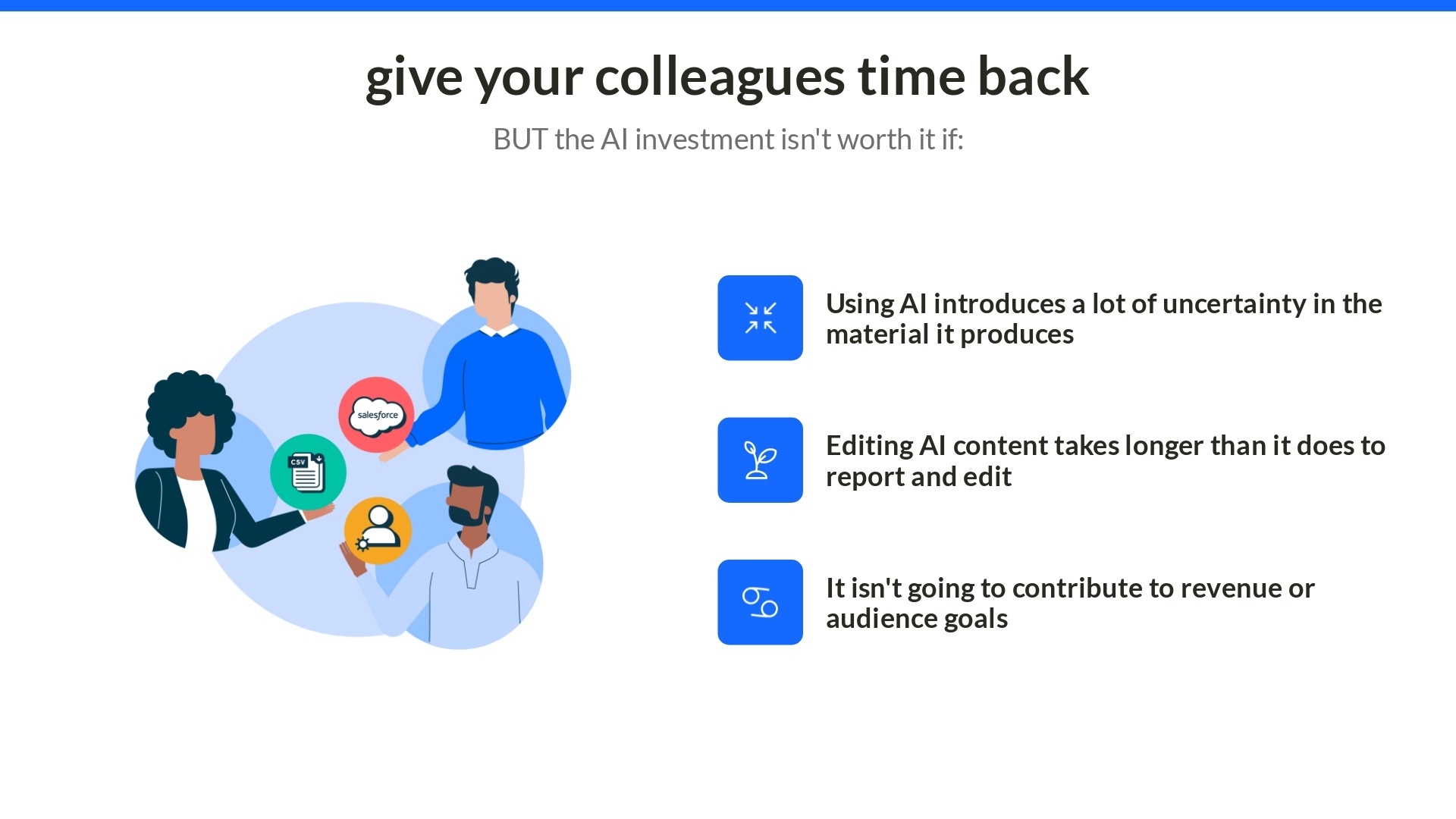

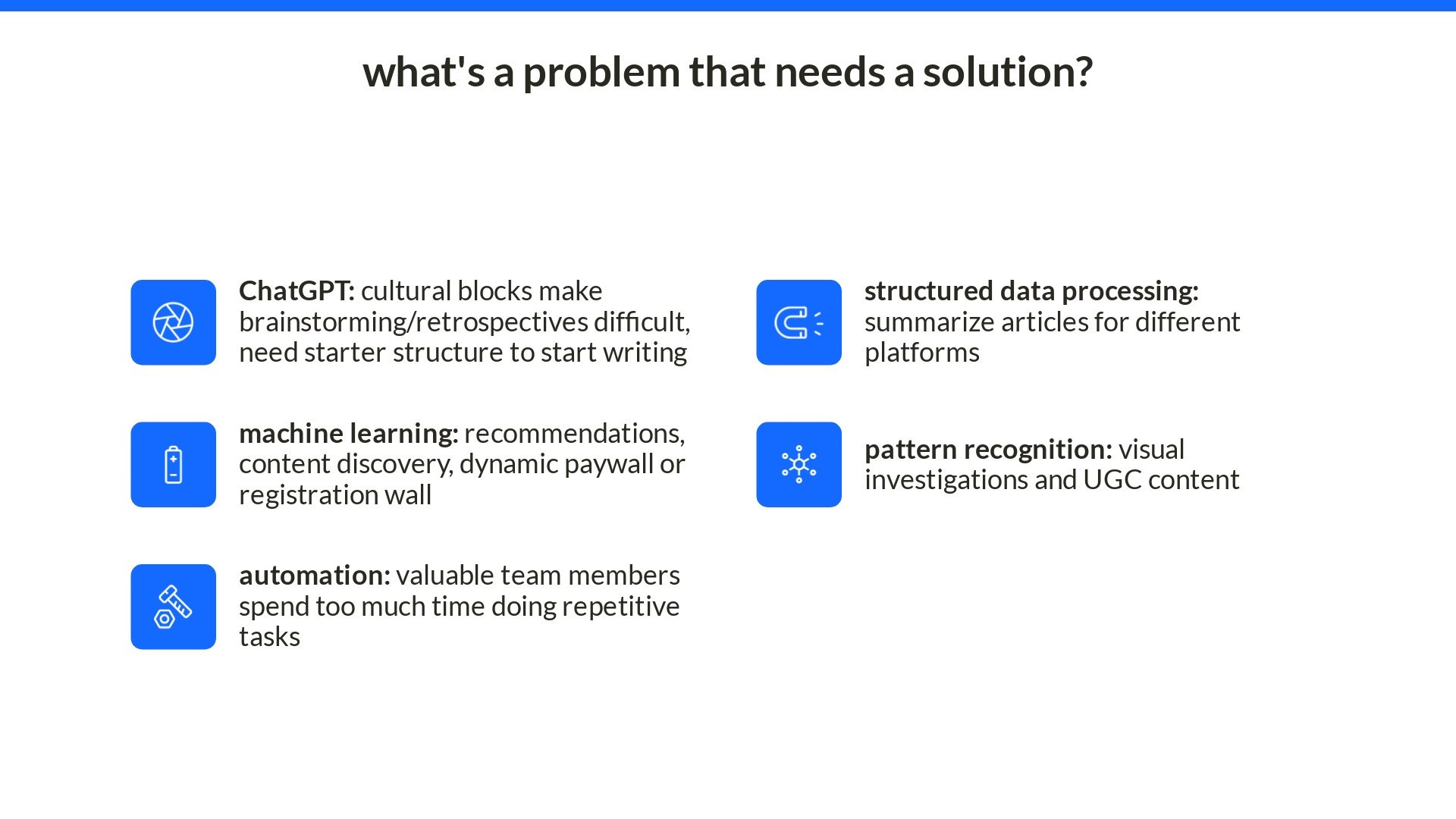

Over the years, I’ve found that the tricky thing about working with emerging technologies is that they are solutions looking for problems. Technology is a hammer, and from a hammer’s perspective everything looks like a nail. The difference is that AI doesn’t get to decide. You do, and you have a full toolkit of potential ways to fix problems. The key is to know which of your problems are most important and most worth solving, then learn about the new tools, including AI, that might solve them. Without a problem worth solving, experimenting with AI or any other technology won’t be worth the time.

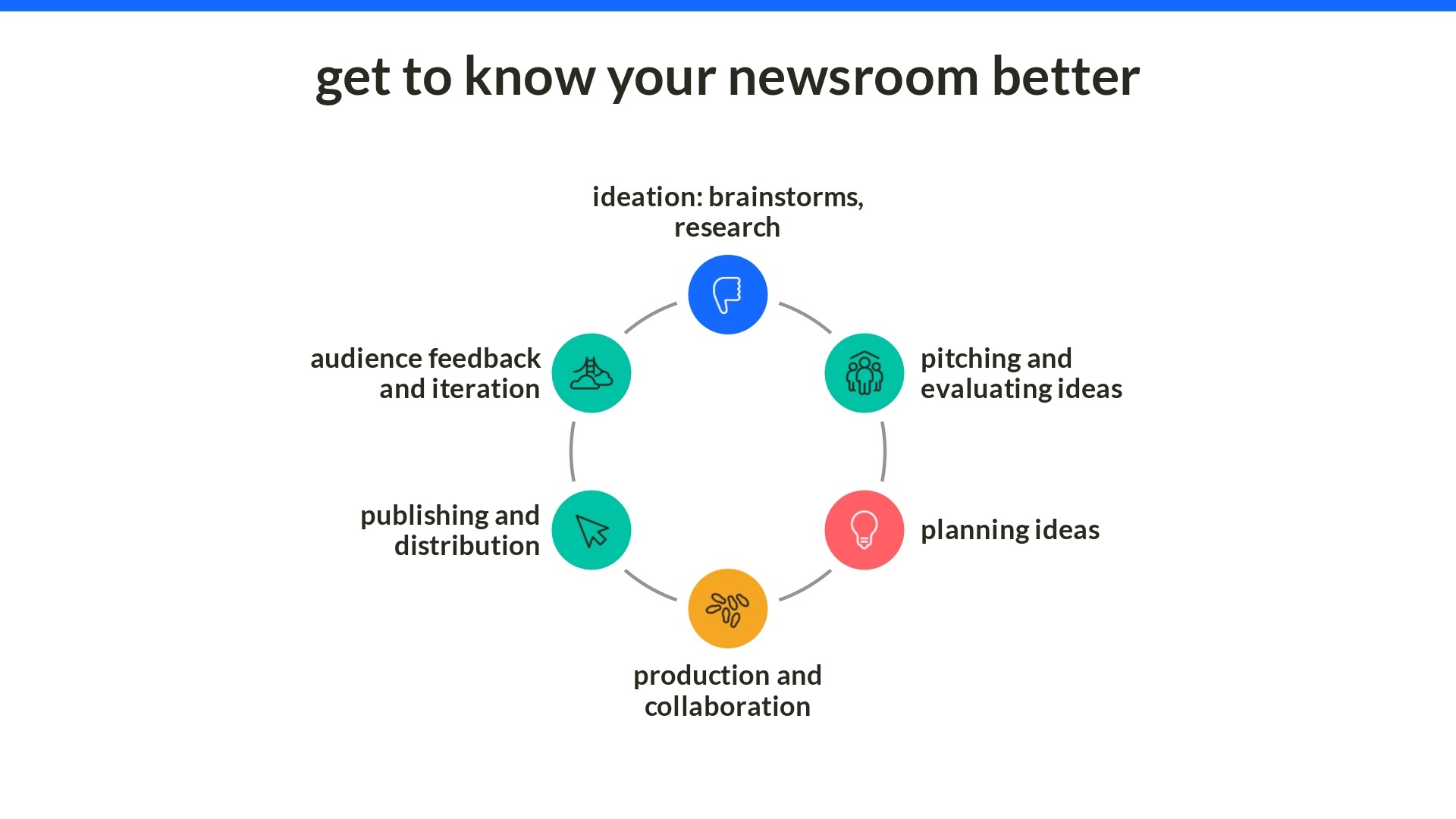

In my presentation, roughly 50 people were tasked with identifying where the biggest, most important problems worth solving existed in their newsroom. This is where you should start — know your newsroom well and the solutions will follow.

Where do the biggest operational problems exist in your newsroom? Identify a few from the production cycle and list them.

Arguably, one of the most neutral ways to experiment with generative AI is outside editorial content, in business operations tasks. I’ve had to write job descriptions in every newsroom manager role I’ve held, and the benefit of generative AI is that it draws on the collective intelligence of the best job descriptions companies have posted on the internet. Without good editors in HR, which fewer and fewer of us have, this is a tool we can use to write more accessible and inclusive job descriptions for internships, fellowships and staff roles, and as a foundation to grow our teams.

Beyond job descriptions, we discussed how ChatGPT could help start structured stories, including timely evergreen articles (e.g. publishing passport guidelines as a supplement to a news piece on TSA delays), and how it could prompt brainstorms and retrospectives that draw on more best practices than the format you might have used for a long time.

It’s also important to know what ChatGPT shouldn’t do in your newsroom. We know it’s not the tool to fact-check, provide unbiased content, manage embargoed or sensitive materials (since they’re no longer private once you put them into ChatGPT) or report original content without an editor.

If AI is the tool to solve a problem worth solving in your newsroom, by all means, dive in head first. I’ll leave you with these guidelines and an assignment for setting a responsible AI strategy when you take that leap. Best of luck!

Is your company looking to solve a problem using AI? As the person deciding the AI strategy in your newsroom, write a mission statement of intention that includes the following:

- How we’ll use AI in our work (behind the scenes or in our articles) and why we’re using it (audience, business or editorial goals)

- Where the AI-assisted or -generated content will appear onsite

- How we anticipate AI will affect jobs

- If applicable, whether the use of AI is under discussion with your newsroom union

Some good references can be found at AP and Wired.

Are you looking for help creating an AI strategy for your newsroom? To work with API on creating a clear, transparent and audience-centered AI strategy, reach out to elite.truong@pressinstitute.org with the subject line “AI Strategy.”