“Data and journalism have become deeply intertwined, with increased prominence,” journalist Alex Howard wrote in a report for the Tow Center at Columbia University titled “Debugging the backlash to data journalism.” “To make sense of the data deluge, journalists today need to be more numerate, technically literate and logical.”

These changes work to the industry’s great advantage, said Steve Doig, who teaches at the Cronkite School of Journalism at Arizona State University. Twenty years ago, Doig was a reporter at the Miami Herald, painstakingly searching for patterns in data stored on 9-track tapes.

“Data is not a stranger to journalism,” he said. As far back as the 1960s, long before reporters had easy access to personal computers, they were doing computer-assisted reporting projects. The name of the practice has evolved, from precision journalism to computer-assisted reporting to data-driven reporting. But “the key word in ‘data journalism’ is ‘journalism,’” Doig said in an interview.

The people who do this work stress that data projects begin and end with traditional journalism know-how: how to find a story, how to find impact and human interest, how to explain concepts to the public.

In his 2014 report, Alex Howard charted the history of data journalism and its rapid recent growth.

“There have been reporters going to libraries, agencies, city halls and courts to find public records about nursing homes, taxes, and campaign finance spending for decades,” he wrote. “The difference today is that in addition to digging through dusty file cabinets in court basements, they might be scraping a website, or pulling data from an API.”

Doig was an early experimenter in computer-assisted reporting, back when the phrase could be taken more literally: in the 1980s, he was reporting with the help of computers at a time when computers themselves were scarce. Data aficionados sometimes had to borrow computers from universities, he said.

When Hurricane Andrew roared ashore in South Florida in 1992, Doig was a Miami Herald reporter who had been experimenting with SAS statistical software and census data on the Herald’s behemoth mainframe.

“When our reporters mentioned that the county was doing a house-by-house inventory of damage, I realized I could match that with the property tax roll and look for patterns,” he said. Matching the damage inventory against the tax roll gave Doig what he called “the one true smoking gun of my career”: the newer the home, the more likely it was to be destroyed in the storm.

This discovery led the Herald to investigate building code inspections, “literally millions” of them, and the records showed inspectors carrying out, on paper at least, an impossible 60 to 70 inspections a day.

From there, the reporters turned to campaign finance and found that a huge share of contributions in Florida came from the construction industry. The paper had to hire a data-entry house to convert 10 years of paper campaign finance records into structured data.

At the end of all this work, all these floppy disks, all these piles of paper, came the 1993 Pulitzer Prize for Public Service, the Pulitzers’ highest award.

“A key part of the project was trying to get beyond the finger pointing that was going on,” Doig said. “The data allowed us to find that evidence.”

Today, Doig said, reporters can do that analysis with nothing but Internet access and free tools on a laptop. Not only that, but the public has come to expect it.

“To me, the real heart of learning [data reporting] is so that we can fulfill our watchdog function,” he said. “It’s a necessary part of journalism to be able to analyze data that particularly the government collects, and use it to basically measure whether the government is doing its job or not.”

Not only are journalists and the general public far more familiar with data now, but data itself has proliferated: computer technology and automation have created a world of recorded data that simply didn’t exist before.

Tools are easier to use, computers are faster, and “every day, millions and millions of rows of data are coming out of federal governments, state governments, city governments,” ProPublica editor Scott Klein said.

“It’s the journalist’s responsibility to help people make sense of that,” he continued. “I believe it’s a responsibility of local newsrooms to help people be empowered by all of this data.”

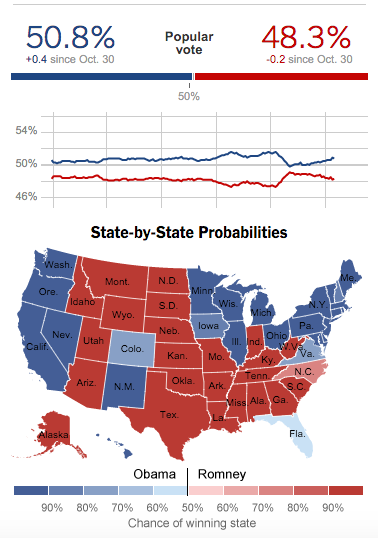

Credit: New York Times

Data journalism has also exploded in popularity in the last few years, thanks in part to work such as Nate Silver’s correct calls in all 50 states in the 2012 presidential election. At the same time, some data journalism pioneers still work in the field as editors or professors, passing its foundations on to a generation raised on computers.

Jacob Harris spent several years on a New York Times data team. He said the plethora of new tools and publication platforms makes data journalism much easier, but it also makes it easier to “start churning this stuff out without really thinking about it.”

Alongside the growth of data journalism in general, data projects of little merit have multiplied on the Internet: dubious scientific surveys, for instance, or maps of fun facts.

“It’s super easy to put dots on a map at this point,” Harris said. The key ingredient that’s missing, as we will see in this paper, is journalism.


Diving into data journalism
